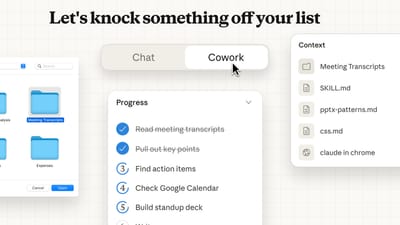

Anthropic Launches Claude Cowork Feature for macOS Users

Anthropic has introduced Cowork, a new capability for its Claude AI that lets subscribers grant the chatbot access to a folder on macOS. Users can then chat with Claude to organize files, rename items, and generate spreadsheets or documents from the folder's contents. The feature, currently limited to Claude Max subscribers at $100 per month, also works with connectors for app integration and with the Claude Chrome extension. Anthropic cautions that Cowork is a research preview, recommends using it only on non-sensitive data, and notes that it includes defenses against prompt-injection attacks.