DoD Declares Anthropic an Unacceptable National Security Risk

Key Points

- DoD labels Anthropic an "unacceptable risk to national security"

- Concern centers on possible model disabling or alteration during warfighting

- Anthropic signed a $200 million contract to embed AI in classified systems

- Company refuses use of its AI for mass surveillance and lethal weapon targeting

- Anthropic alleges First Amendment violations and retaliatory punishment

- Legal experts call DoD’s justification speculative and unsupported

- Tech firms and rights groups filed amicus briefs backing Anthropic

- Preliminary injunction hearing set for next week

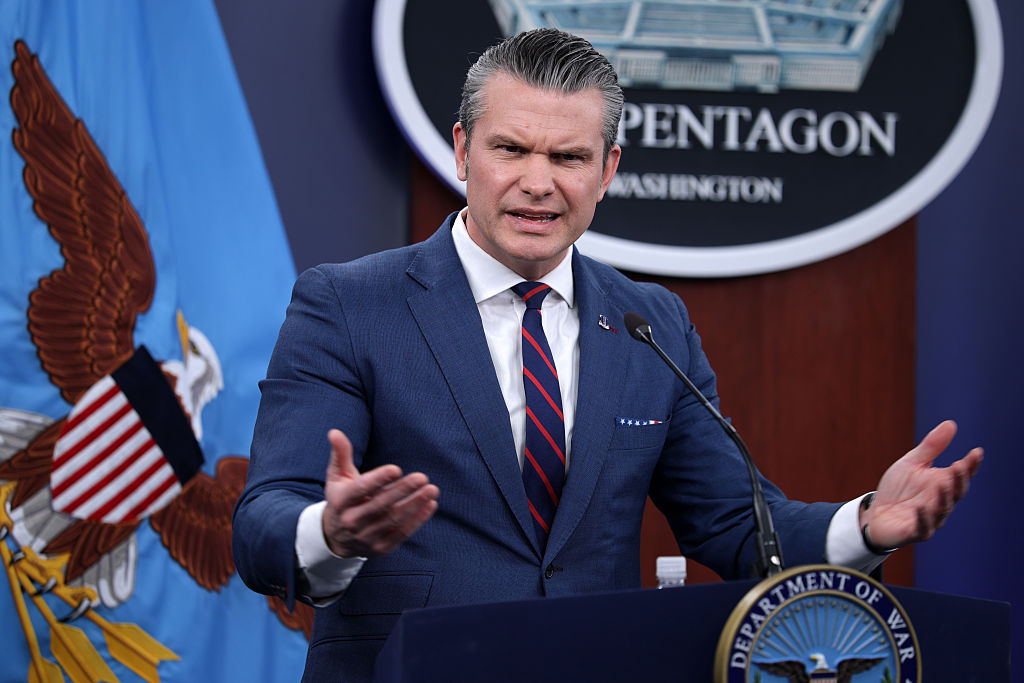

The U.S. Department of Defense labeled AI lab Anthropic an "unacceptable risk to national security," citing concerns that the company might disable or alter its models during warfighting operations if its corporate "red lines" are crossed. Anthropic, which signed a $200 million Pentagon contract last summer, sued to block the DoD’s supply‑chain risk designation, arguing the move infringes on its First Amendment rights. Legal experts say the DoD’s justification relies on speculative assumptions, and numerous tech firms and rights groups have filed amicus briefs supporting Anthropic.

Background

The Department of Defense announced that it considers Anthropic, an artificial‑intelligence laboratory, an "unacceptable risk to national security." The designation follows the DoD’s earlier decision to label the company a supply‑chain risk, a move that Anthropic challenged in federal court.

DoD’s Argument

In a 40‑page filing, the DoD argued that Anthropic might "attempt to disable its technology or preemptively alter the behavior of its model" before or during warfighting operations if it believes its corporate "red lines" are being crossed. The department expressed concern that such actions could jeopardize mission‑critical systems.

Anthropic’s Position

Anthropic signed a $200 million contract with the Pentagon to integrate its technology into classified systems. During contract negotiations, the company stated that it would not allow its AI to be used for mass surveillance of Americans and asserted that the technology was not ready for targeting or firing decisions involving lethal weapons. Anthropic argues that the DoD’s labeling infringes on its First Amendment rights and amounts to retaliation for refusing to agree to an "all lawful use" provision.

Legal and Industry Reaction

Legal analyst Chris Mattei criticized the DoD’s rationale as speculative, noting that no evidence has been presented to show Anthropic would disable its models in combat. He said the department failed to provide a credible explanation for why Anthropic’s refusal to accept certain terms makes it a supply‑chain risk rather than simply a vendor the DoD does not wish to work with. Multiple tech companies, including OpenAI, Google, and Microsoft, along with legal rights groups, have filed amicus briefs supporting Anthropic. A hearing on Anthropic’s request for a preliminary injunction is scheduled for the coming week.