Anthropic Secures 3.5 GW of Google TPU Capacity via Broadcom, Revenue Run Rate Tops $30B

Key Points

- Anthropic will access ~3.5 GW of Google TPU compute via Broadcom starting in 2027, adding to 1 GW for 2026.

- The agreement marks Anthropic's largest compute commitment and expands U.S. AI infrastructure under its $50 billion pledge.

- Broadcom acts as the silicon‑to‑workload layer and has a separate long‑term deal with Google for future TPU designs and networking through 2031.

- Anthropic’s revenue run‑rate has surpassed $30 billion, up from about $9 billion at the end of 2025.

- Anthropic raised $30 billion in its Series G round at a $380 billion post‑money valuation; the number of customers spending more than $1 million a year has since doubled to over 1,000.

- Claude runs on Amazon Trainium, Google TPUs, and Nvidia GPUs, giving Anthropic a multi‑vendor hardware advantage.

- Broadcom shares rose roughly 3% after the announcement; Mizuho analysts project Broadcom could earn $21 billion in AI revenue from Anthropic in 2026.

- The deal reflects a broader compute arms race, with firms like SoftBank and Meta making similarly large infrastructure commitments.

Anthropic announced on April 6 that it will tap roughly 3.5 gigawatts of next‑generation Google Tensor Processing Unit (TPU) compute through Broadcom starting in 2027, adding to the 1 GW already supplied for 2026. The move backs the AI lab’s $50 billion pledge to expand U.S. AI infrastructure and comes as the company reports a revenue run‑rate exceeding $30 billion—more than triple its figure at the end of 2025. Broadcom’s role as the silicon‑to‑workload bridge and the scale of the deal underscore the accelerating compute arms race among AI firms.

The agreement is the largest infrastructure commitment in Anthropic’s history.

Chief financial officer Krishna Rao called the arrangement "our most significant compute commitment to date," emphasizing the company’s disciplined approach to scaling. Most of the new capacity will be deployed in the United States, extending Anthropic’s November 2025 promise to invest $50 billion in American AI computing infrastructure.

Broadcom’s expanding role

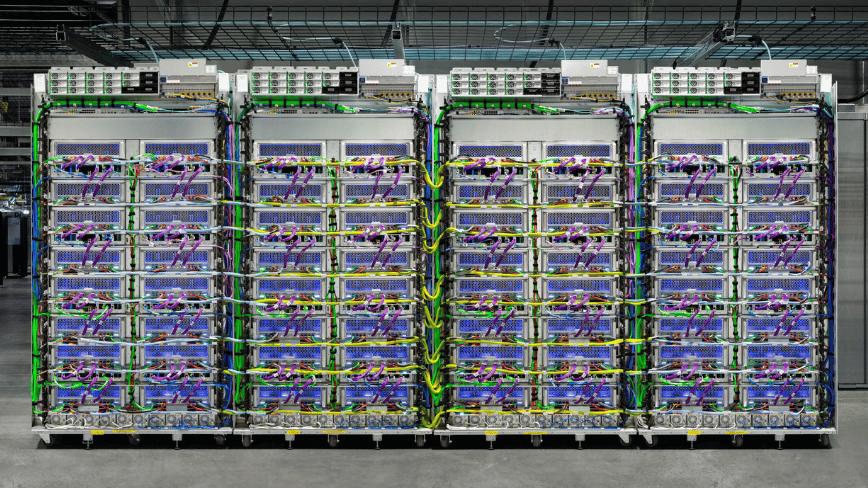

Under the agreement, Broadcom serves as the intermediary between Google’s custom silicon and Anthropic’s training and inference workloads. The chipmaker also signed a separate long‑term contract with Google to design future TPU chips and to supply networking and other components for Google’s AI data racks through 2031. Broadcom’s shares rose roughly 3% in after‑hours trading after the announcement, reflecting investor interest in firms that sit at the physical layer of the AI stack.

Analysts at Mizuho projected that Broadcom could generate $21 billion in AI revenue from Anthropic in 2026, climbing to $42 billion in 2027. Those figures, while speculative, illustrate the financial weight of the commitment.

Broadcom’s involvement traces back to September 2025, when an unnamed customer placed a $10 billion order for custom TPU racks. That customer was later confirmed to be Anthropic, which placed an additional $11 billion order in December.

Anthropic’s compute expansion dovetails with a dramatic surge in its commercial performance. The company says its revenue run‑rate now exceeds $30 billion, up from roughly $9 billion at the close of 2025. The jump follows a Series G financing round on February 12, 2026, which raised $30 billion at a $380 billion post‑money valuation. At that time, Anthropic counted more than 500 enterprise customers each spending over $1 million annually; that number has since doubled to more than 1,000.

Anthropic runs Claude, its flagship model, on a multi‑vendor hardware mix that includes Amazon’s Trainium chips, Google’s TPUs, and Nvidia GPUs. Claude is also the only frontier AI model available across Amazon Web Services, Google Cloud, and Microsoft Azure, a spread that gives Anthropic negotiating leverage and resilience against supply shocks.

Amazon remains a foundational partner. In late 2024, Anthropic named AWS its primary cloud and training partner, with Amazon’s total investment in the company reaching $8 billion. Project Rainier, an AWS‑built supercomputer cluster for Anthropic in Indiana, operates roughly 500,000 Trainium2 chips and was slated to surpass one million chips by the end of 2025.

The U.S. focus of the new capacity aligns with the Trump administration’s AI Action Plan, which prioritizes domestic compute resources. Anthropic’s $50 billion infrastructure pledge was initially built around data‑center sites in Texas and New York developed with UK‑based Fluidstack; most of the Broadcom‑sourced TPU capacity will now be added under that pledge through 2027.

The deal is a clear data point in the broader compute arms race, which has seen SoftBank commit $40 billion to support OpenAI, and Meta sign a $27 billion infrastructure agreement with Nebius. As AI models grow more demanding, labs like Anthropic must secure financing and partnerships on the scale of utility projects.

For Broadcom, the partnership cements its status as a load‑bearing element of the AI infrastructure stack, positioning it alongside industry giants such as Nvidia, which continues to dominate accelerator markets.