Anthropic Introduces AI-Powered Code Review Tool for Claude Code

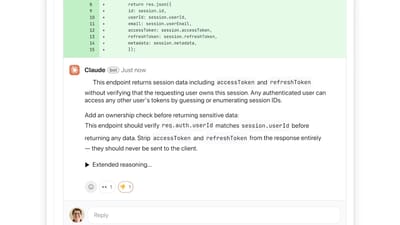

Anthropic has launched Code Review, an AI-driven reviewer built into its Claude Code platform. Designed for enterprise customers, the tool automatically scans pull requests, flags logic errors, and suggests actionable fixes directly in GitHub. By focusing on high-priority bugs rather than style issues, Code Review aims to ease the bottleneck created by the surge in AI-generated code, helping large development teams ship faster and with fewer defects.