Anthropic Introduces AI-Powered Code Review Tool for Claude Code

Key Points

- Anthropic launches Code Review, an AI‑driven reviewer for Claude Code.

- Tool automatically scans pull requests and highlights logical errors.

- Integrates directly with GitHub and provides step‑by‑step explanations.

- Uses a multi‑agent system to prioritize and aggregate findings.

- Offers light security analysis with optional deeper checks.

- Pricing is token‑based, estimated at $15‑$25 per review.

- Targeted at enterprise customers handling high volumes of AI‑generated code.

Anthropic has launched Code Review, an AI-driven reviewer built into its Claude Code platform. Designed for enterprise customers, the tool automatically scans pull requests, highlights logical errors, and offers actionable fixes directly in GitHub. By focusing on high‑priority bugs rather than style issues, Code Review aims to reduce the bottleneck caused by the surge of AI‑generated code, helping large development teams ship faster and with fewer defects.

Background

Developers rely on peer feedback to catch bugs early, maintain consistency, and improve overall software quality. The rise of “vibe coding” (using AI tools that generate large amounts of code from plain‑language instructions) has accelerated development but also introduced new bugs, security risks, and poorly understood code. Anthropic’s Claude Code has dramatically increased code output, and the resulting surge in pull requests awaiting review can slow down shipping.

Introducing Code Review

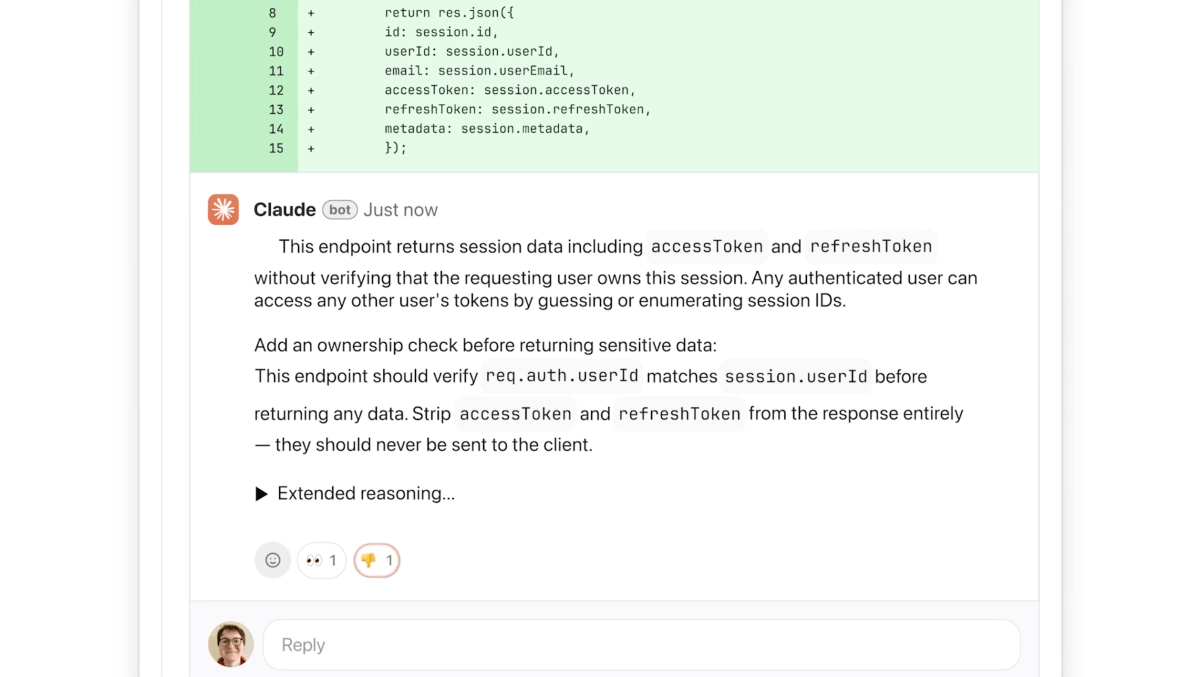

In response, Anthropic unveiled Code Review, an AI reviewer available to Claude for Teams and Claude for Enterprise customers. The product automatically analyzes every pull request, leaves comments directly on the code, and explains potential issues with step‑by‑step reasoning. It prioritizes logical errors over style concerns and labels severity by color: red for the highest severity, yellow for potential problems, and purple for issues tied to existing code or historical bugs.

How It Works

Code Review employs a multi‑agent architecture where several agents examine the codebase from different perspectives. A final agent aggregates findings, removes duplicates, and ranks the most important issues. The tool offers a light security analysis, and engineering leads can add custom checks based on internal best practices. Claude Code Security, a newer feature, provides deeper security analysis when needed.
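
The aggregation step described above can be illustrated with a minimal Python sketch. This is not Anthropic's implementation: the `Finding` type, the `aggregate` function, the choice to treat two findings on the same file and line as duplicates, and the ordering of purple below yellow are all assumptions made for illustration. Only the three color labels come from the product description.

```python
from dataclasses import dataclass

# Assumed ranking: the article says red is the highest severity; placing
# purple after yellow is a guess made for this sketch.
SEVERITY_RANK = {"red": 0, "yellow": 1, "purple": 2}


@dataclass(frozen=True)
class Finding:
    """One issue reported by one reviewing agent (hypothetical structure)."""
    agent: str
    path: str
    line: int
    severity: str  # "red", "yellow", or "purple"
    message: str


def aggregate(findings):
    """Merge findings from several agents, drop duplicates, and rank them.

    Assumption: findings that point at the same file and line describe the
    same issue, and the most severe report of it is the one worth keeping.
    """
    best = {}
    for f in findings:
        key = (f.path, f.line)
        current = best.get(key)
        if current is None or SEVERITY_RANK[f.severity] < SEVERITY_RANK[current.severity]:
            best[key] = f
    # Most severe first; ties broken by location so the order is stable.
    return sorted(
        best.values(),
        key=lambda f: (SEVERITY_RANK[f.severity], f.path, f.line),
    )
```

Calling `aggregate` on the combined output of all agents returns one entry per location, most severe first. A real system would likely need a less crude notion of "duplicate" than matching file and line.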

Integration and Pricing

The system integrates with GitHub, and Code Review can be enabled by default for every engineer on a team. Pricing is token‑based and varies with code complexity; Anthropic estimates that the average review will cost between $15 and $25.

Market Impact

Anthropic targets large‑scale enterprise users such as Uber, Salesforce, and Accenture—companies already using Claude Code and now seeking help managing the volume of pull requests generated by AI. The company expects the tool to enable enterprises to build faster and with fewer bugs, addressing market demand for reliable AI‑assisted development.