Your brain can spot AI voices even when you can’t

Key Points

- Listeners struggled to consciously differentiate real speech from AI‑generated speech.

- EEG recordings showed distinct neural responses within milliseconds of hearing each voice.

- Three neural response peaks appeared at roughly 55 ms, 210 ms, and 455 ms after stimulus onset.

- Acoustic analysis identified differences in the 5.4 to 11.7 Hz modulation range between real and synthetic speech.

- The brain’s early detection occurs before any conscious decision is made.

- Findings suggest potential for training programs that align neural cues with conscious awareness.

- Research highlights a gap between unconscious perception and conscious judgment of deepfake audio.

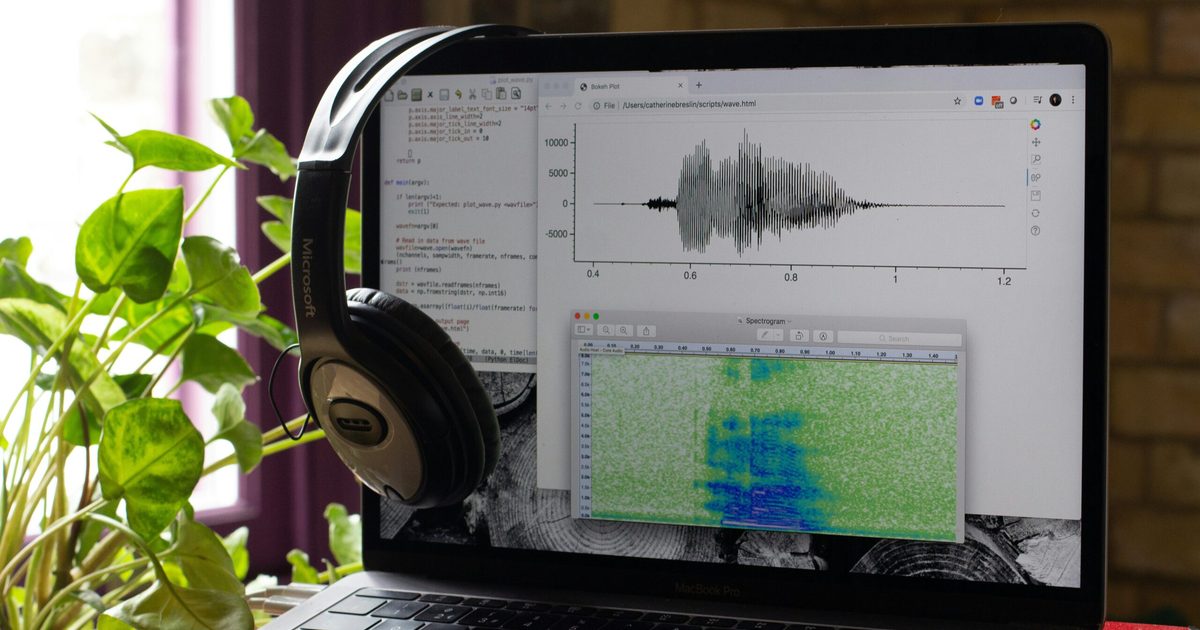

Researchers from Tianjin University and the Chinese University of Hong Kong found that although listeners often fail to consciously distinguish real human speech from synthetic AI voices, their brains begin to tag subtle acoustic differences after brief exposure. Using EEG caps, the team recorded early neural responses that separate real and AI speech within milliseconds, highlighting a gap between unconscious perception and conscious decision‑making. The findings suggest the auditory system is already adapting to AI‑generated voices, offering hope for future tools that could help people translate these neural cues into reliable detection of deepfake audio.

Study Overview

Scientists from Tianjin University and the Chinese University of Hong Kong tested a group of listeners on their ability to tell real human speech apart from AI‑generated speech. Participants were asked to press a button indicating whether each voice was real or fake. The test included sentences spoken by actual people, basic synthetic speech, and a more refined AI voice that sounded very natural.

Conscious Performance

Despite brief training designed to improve detection, listeners consistently struggled to make correct judgments. The majority of responses were incorrect, showing that conscious perception alone was insufficient for reliable identification of AI‑cloned voices.

Neural Findings

Although participants performed poorly on the task, EEG caps recorded their brain activity throughout the experiment. After just twelve minutes of training, distinct neural patterns began to emerge. The brain showed three separate response peaks, at roughly 55 ms, 210 ms, and 455 ms after the onset of each voice. These early‑stage signals occurred well before any conscious decision was made, indicating that the auditory system was silently processing subtle differences between real and synthetic speech.
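To make the idea of "response peaks" concrete, here is a minimal sketch of one common way such latencies can be located: average the EEG trials for each condition, subtract the averages, and look for local maxima in the difference. This is not the study's pipeline, which is not described here. The function names, the threshold, and the synthetic trials are all assumptions for illustration, and the bumps at 55, 210, and 455 ms are planted by hand to mirror the reported latencies rather than taken from real data.

```python
import math

def average(trials):
    """Average equal-length trials sample by sample."""
    n = len(trials)
    return [sum(column) / n for column in zip(*trials)]

def difference_peaks_ms(real_trials, ai_trials, sample_rate_hz, rel_threshold=0.5):
    """Latencies (ms) where the AI-minus-real average has a local maximum in magnitude."""
    diff = [a - r for r, a in zip(average(real_trials), average(ai_trials))]
    mag = [abs(d) for d in diff]
    floor = rel_threshold * max(mag)  # ignore small ripples (assumed cutoff)
    peaks = []
    for i in range(1, len(mag) - 1):
        if mag[i] >= floor and mag[i] > mag[i - 1] and mag[i] > mag[i + 1]:
            peaks.append(1000.0 * i / sample_rate_hz)
    return peaks

def synthetic_trial(bumps, scale, n_samples=600):
    """Hypothetical trial: a shared slow wave plus optional Gaussian bumps."""
    trial = []
    for t in range(n_samples):
        shared = 0.3 * math.sin(2 * math.pi * t / 200)
        extra = sum(a * math.exp(-0.5 * ((t - c) / 15.0) ** 2) for c, a in bumps)
        trial.append(shared + scale * extra)
    return trial

# Synthetic data only: bumps are placed at the latencies the article reports.
planted = [(55, 1.0), (210, 0.8), (455, 0.9)]
real = [synthetic_trial([], 1.0) for _ in range(6)]
ai = [synthetic_trial(planted, 1.0 + 0.1 * ((i % 3) - 1)) for i in range(6)]
print(difference_peaks_ms(real, ai, sample_rate_hz=1000))
```

Because the slow wave is shared by both conditions, it cancels in the subtraction and only the condition-specific bumps remain. Real EEG is far noisier, so an actual analysis would need filtering, many more trials, and statistical testing.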

Acoustic Differences

Further acoustic analysis revealed that real and AI speech differed in the 5.4 to 11.7 Hz modulation range, a frequency band linked to how the brain tracks rapid speech details such as phonemes and syllable onsets. Even the most natural‑sounding AI voices did not perfectly replicate these micro‑variations, providing a physical basis for the brain’s early detection.
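A modulation range describes how quickly the loudness envelope of a sound rises and falls, not the pitch of the sound itself. The sketch below shows one simple way to estimate how much of a signal's envelope fluctuation falls between 5.4 and 11.7 Hz. It is an illustrative assumption, not the method the researchers used: the envelope is approximated by block-averaging the rectified signal, and the function names, the 100 Hz envelope rate, and the test tones are all hypothetical.

```python
import math

def band_fraction(signal, sample_rate, f_lo=5.4, f_hi=11.7, env_rate=100):
    """Fraction of envelope-modulation power that lies between f_lo and f_hi (Hz)."""
    block = int(sample_rate // env_rate)
    n = len(signal) // block
    if block < 1 or n < 4:
        raise ValueError("signal too short for the chosen envelope rate")
    actual_rate = sample_rate / block
    # Crude envelope: mean absolute amplitude in consecutive blocks.
    env = [sum(abs(s) for s in signal[i * block:(i + 1) * block]) / block
           for i in range(n)]
    mean = sum(env) / n
    env = [e - mean for e in env]  # remove the constant (DC) part
    in_band = total = 0.0
    for k in range(1, n // 2 + 1):  # naive DFT over positive frequencies
        freq = k * actual_rate / n
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(env))
        im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(env))
        power = re * re + im * im
        total += power
        if f_lo <= freq <= f_hi:
            in_band += power
    return in_band / total if total > 0 else 0.0

def am_tone(mod_hz, sample_rate=2000, seconds=2.0, carrier_hz=200.0):
    """Hypothetical test signal: a tone whose loudness wobbles at mod_hz."""
    n = int(sample_rate * seconds)
    return [(1 + 0.5 * math.sin(2 * math.pi * mod_hz * t / sample_rate))
            * math.sin(2 * math.pi * carrier_hz * t / sample_rate)
            for t in range(n)]

print(band_fraction(am_tone(8.0), 2000))  # modulation inside the band
print(band_fraction(am_tone(3.0), 2000))  # modulation below the band
```

A tone modulated at 8 Hz should put nearly all of its envelope power inside the band, and one modulated at 3 Hz should put almost none there. Comparing real and synthetic recordings with a measure like this would show whether they differ in the band, though a careful analysis would use a proper envelope extraction and many recordings.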

Implications

The research suggests that humans are not defenseless against voice‑cloning fraud. The brain’s hardware is already capable of recognizing subtle cues, but the conscious mind has yet to connect those cues to the notion of “fake.” Future training programs could bridge this gap by teaching listeners to focus on the specific acoustic fingerprints their auditory system already detects. Such targeted education could improve public awareness and resilience against deepfake audio threats.

Conclusion

Overall, the study demonstrates a clear disconnect between unconscious neural processing and conscious judgment when it comes to AI‑generated speech. While the conscious mind may be fooled, the brain is quietly doing its homework, laying the groundwork for more effective detection tools and training methods in the future.