Trump Administration Proposes Federal AI Framework That Preempts State Laws

Key Points

- Federal AI framework seeks a single national standard, preempting state AI laws.

- Seven objectives focus on innovation, scaling, and a light‑touch regulatory approach.

- State authority limited to general laws; AI development deemed an interstate issue.

- Responsibility for child safety shifted to parents; Congress urged to require platform safeguards.

- Liability shield proposed for developers against state penalties for third‑party misconduct.

- Framework lacks clear enforcement mechanisms and independent oversight.

- Industry praises uniform standards; critics warn it curtails state experimentation.

- Executive order earlier directed agencies to challenge “onerous” state AI regulations.

The Trump administration unveiled a legislative framework aimed at creating a single, nationwide AI policy. The plan would centralize authority in Washington, preempting state AI regulations while emphasizing a light‑touch, innovation‑focused approach. It assigns greater responsibility for child safety to parents, calls on Congress to require platforms to add safeguards against sexual exploitation, and seeks to shield developers from state liability. Critics argue the proposal limits state experimentation and lacks clear enforcement mechanisms, while industry leaders praise the promise of a uniform national standard for startups.

Federal Initiative to Standardize AI Policy

The Trump administration announced a legislative framework intended to establish a single, national AI policy for the United States. According to a White House statement, a uniform national approach is essential because a patchwork of conflicting state laws would undermine American innovation and the nation's ability to lead in the global AI race.

Key Objectives and Innovation Focus

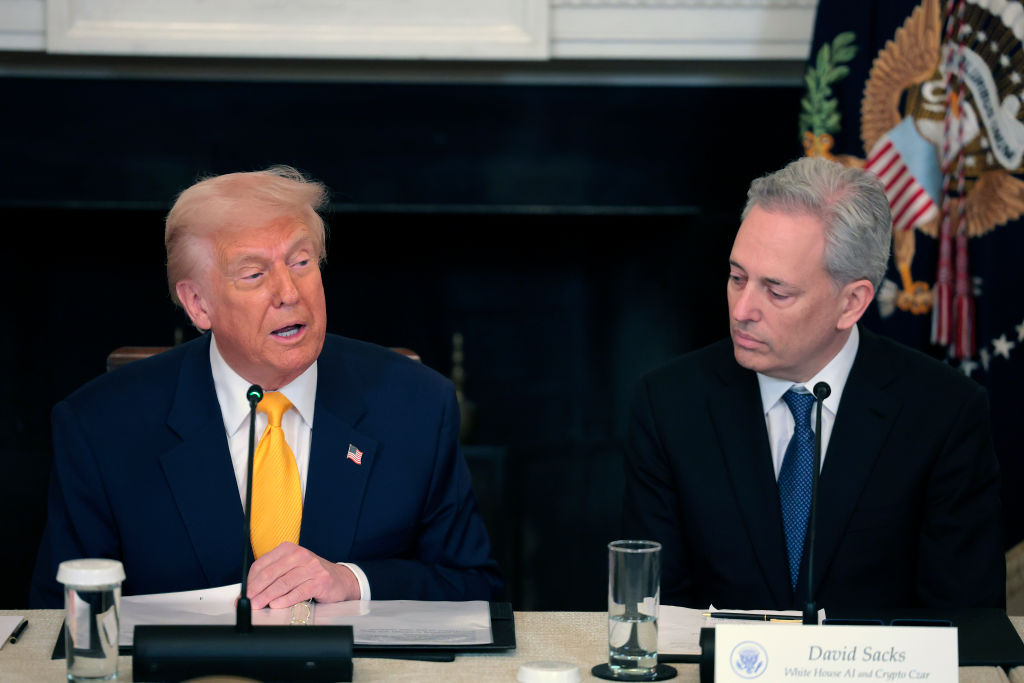

The framework outlines seven key objectives that prioritize innovation and the scaling of AI technologies. It proposes a centralized, "minimally burdensome national standard" that would override stricter state‑level regulations. This approach aligns with the administration's broader push to remove barriers to innovation, a stance championed by White House AI czar and venture capitalist David Sacks.

Preemption of State Authority

Under the proposal, state authority would be limited to generally applicable laws, such as those covering fraud, child protection, and zoning, and to governing state agencies' own use of AI. The framework explicitly states that AI development is an "inherently interstate" issue tied to national security and foreign policy and therefore should not be regulated by individual states. It also proposes a liability shield for developers, preventing states from penalizing them for unlawful conduct by third parties involving their models.

Parental Responsibility and Child Safety

The plan places significant responsibility for child safety on parents rather than on platforms. It calls on Congress to require AI companies to implement features that “reduce the risks of sexual exploitation and harm to minors,” but does not define concrete, enforceable requirements. The framework suggests tools such as account controls to help parents protect their children’s privacy and manage device use.

Copyright and Content Moderation

Regarding intellectual property, the framework seeks a middle ground by citing “fair use” as a justification for training AI on existing works. In the area of content moderation, the proposal focuses on preventing government‑driven censorship rather than imposing platform‑level safeguards. It urges Congress to stop the government from coercing AI providers to ban or alter content based on “partisan or ideological agendas” and to provide legal redress for affected Americans.

Criticism and Industry Reaction

Critics argue that the framework narrows the space for states to act as early regulators and lacks clear mechanisms for oversight, liability, or enforcement against novel harms. They point to state initiatives such as New York's RAISE Act and California's SB‑53, which aim to ensure that large AI companies adopt publicly documented safety protocols. Brendan Steinhauser, CEO of the Alliance for Secure AI, said the proposal serves big‑tech interests at the expense of ordinary Americans.

Conversely, industry figures praised the move. Teresa Carlson, president of the General Catalyst Institute, said the framework offers startups a clear national standard that enables rapid building and scaling without navigating a patchwork of state laws.

Context and Ongoing Legal Battles

The framework follows an executive order signed three months earlier that directed federal agencies to challenge state AI laws and gave the Commerce Department 90 days to compile a list of "onerous" state regulations; that list has not yet been published. The order also instructed the administration to work with Congress on a uniform AI law. Meanwhile, AI company Anthropic is suing the government over a Department of Defense designation of the company as a supply‑chain risk, alleging a violation of its First Amendment rights. President Trump has publicly called Anthropic CEO Dario Amodei "woke" and a "radical leftist."

Potential Implications

If enacted, the framework would centralize AI policymaking in Washington, limit state experimentation, and shift much of the responsibility for child safety to parents. While the industry welcomes the prospect of a clear national standard, advocates for stronger consumer protections and state‑level innovation warn that the proposal may leave significant gaps in oversight, accountability, and enforcement.