Google, Microsoft and xAI Agree to Give U.S. Government Early Access to AI Models

Key Points

- Google, Microsoft and xAI have signed contracts to provide early‑access AI models to the U.S. Commerce Department.

- The Center for AI Standards and Innovation will evaluate the models with reduced or disabled safeguards.

- Agreements aim to assess national‑security risks such as disinformation generation and potential weaponization.

- The deals follow reports that the Trump administration is considering tighter AI oversight, including a working group to review new models before release.

- Industry cooperation may help firms avoid being designated supply‑chain risks, as Anthropic was earlier.

- Critics worry that weakened safeguards could expose vulnerabilities, while supporters stress the need for rigorous measurement.

Google, Microsoft and Elon Musk's xAI have signed agreements that let the Commerce Department’s Center for AI Standards and Innovation evaluate their next‑generation artificial‑intelligence systems before they are released publicly. The deals, announced a day after reports that the Trump administration was weighing tighter AI oversight, call for the companies to provide models with reduced or disabled safeguards so federal analysts can probe national‑security risks. CAISI director Chris Fall said the collaborations will expand the government’s ability to measure frontier AI and protect U.S. interests.

In a move that could reshape how the United States monitors emerging artificial‑intelligence technology, Google, Microsoft and xAI have each signed contracts to hand early versions of their AI models to the Commerce Department’s Center for AI Standards and Innovation (CAISI). The agreements, disclosed on May 5, give federal analysts the chance to examine cutting‑edge systems before they reach the market.

CAISI, the agency tasked with developing measurement standards for advanced AI, will receive the models with either reduced safeguards or, in some cases, safeguards turned off altogether. That level of access allows the center to test the systems for capabilities that could affect national security, from sophisticated data analysis to potential weaponization.

"Independent, rigorous measurement science is essential to understanding frontier AI and its national‑security implications," CAISI director Chris Fall told The Wall Street Journal. "These expanded industry collaborations help us scale our work in the public interest at a critical moment." The statement underscores the administration’s push to stay ahead of rapid AI advancements that could outpace existing regulatory frameworks.

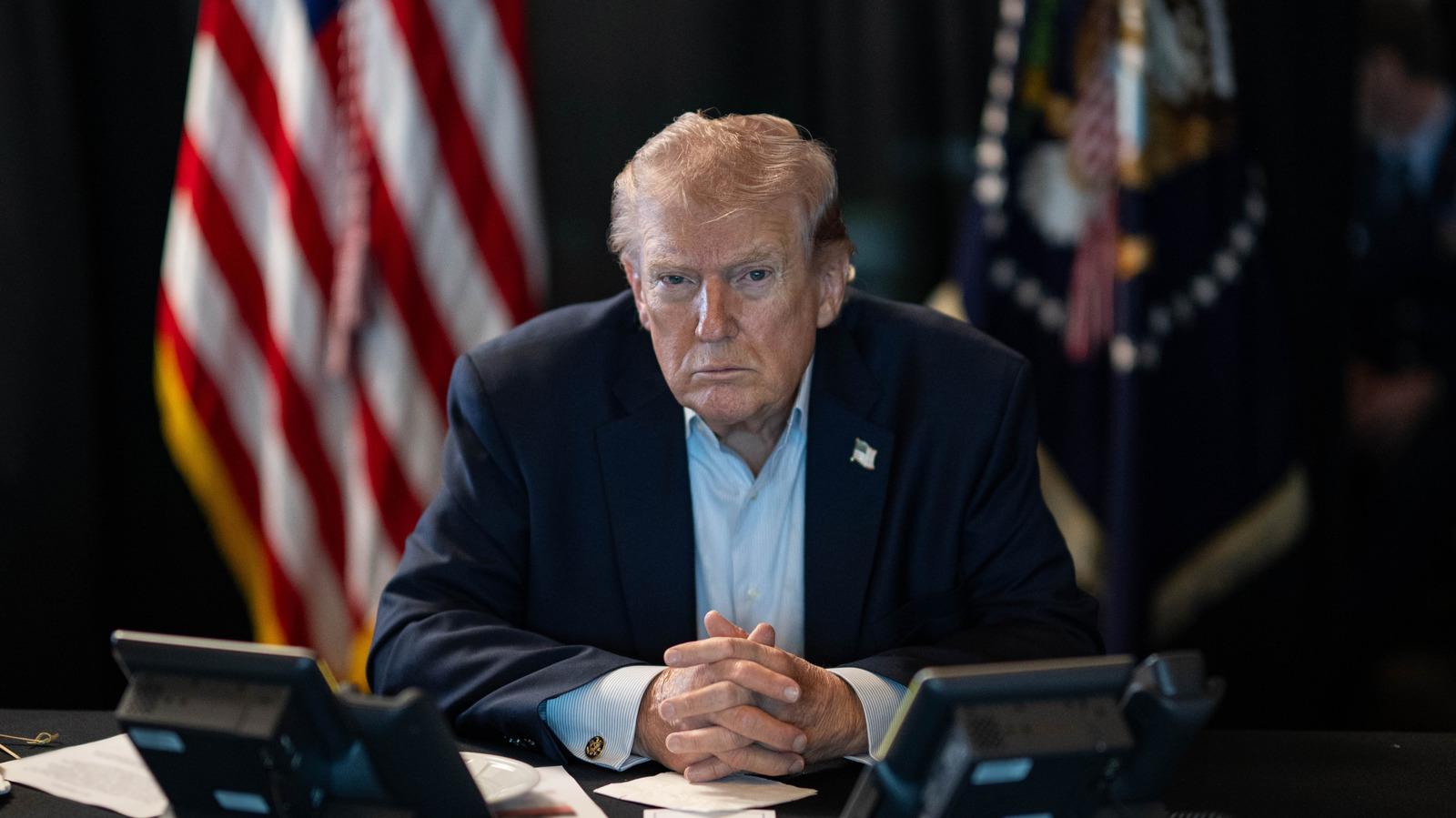

The timing of the agreements is notable. A day earlier, The New York Times reported that the Trump administration was weighing whether to tighten oversight of the AI industry. The White House had been considering a working group empowered to review new models before they are publicly released, a shift from the more hands‑off approach outlined in the president’s AI Action Plan.

Industry insiders say the contracts are a pragmatic response to growing pressure. Companies that resist providing unfettered access could be designated supply‑chain risks, as happened with Anthropic after the Pentagon raised concerns about the firm’s safeguards against mass surveillance and autonomous weapons. By cooperating, Google, Microsoft and xAI sidestep potential blacklisting and demonstrate compliance with federal expectations.

Under the deals, the three firms will hand over their latest models to CAISI for testing. The agency will evaluate the systems for a range of security‑related attributes, including the ability to generate disinformation, evade detection, or be repurposed for military applications. The assessments will inform guidelines that could shape future procurement decisions across federal agencies.

Critics of the approach warn that granting the government access to AI models with weakened safeguards could expose vulnerabilities or create a precedent for broader surveillance. Supporters argue that without such transparency, the United States could fall behind rivals in understanding the threats posed by frontier AI.

While the agreements signal a more collaborative stance between the tech sector and the federal government, they also raise questions about the balance between innovation and security. The administration’s next steps—potentially formalizing a working group with review authority—will determine how far the oversight regime extends.

For now, the three tech giants appear willing to place their most advanced models under government scrutiny, a decision that may set the tone for future industry‑government partnerships in the AI arena.