Google Gemini Brings Task Automation to Samsung Phones

Key Points

- Google Gemini now automates tasks on Samsung phones via a virtual app window.

- Initial support includes food‑delivery and rideshare services.

- The assistant asks clarifying questions before completing actions.

- Users can watch the automation process and intervene at any time.

- Early testing shows successful handling of simple and nuanced requests.

- The feature is currently in beta and will expand to more apps.

- Automation aims to reduce user effort while maintaining control and safety.

Google and Samsung have introduced a new Gemini feature that lets users automate tasks in apps with simple prompts. Initial support covers food delivery and rideshare services: the assistant navigates the app's interface in a virtual window, fills in details, and pauses before final confirmation, so users keep control over each step. In early testing, the system handled requests such as ordering a ride to the airport or a coffee and a croissant, asking clarifying questions when needed. The rollout marks a significant step forward for AI assistants on mobile devices.

Gemini Task Automation Arrives on Samsung Devices

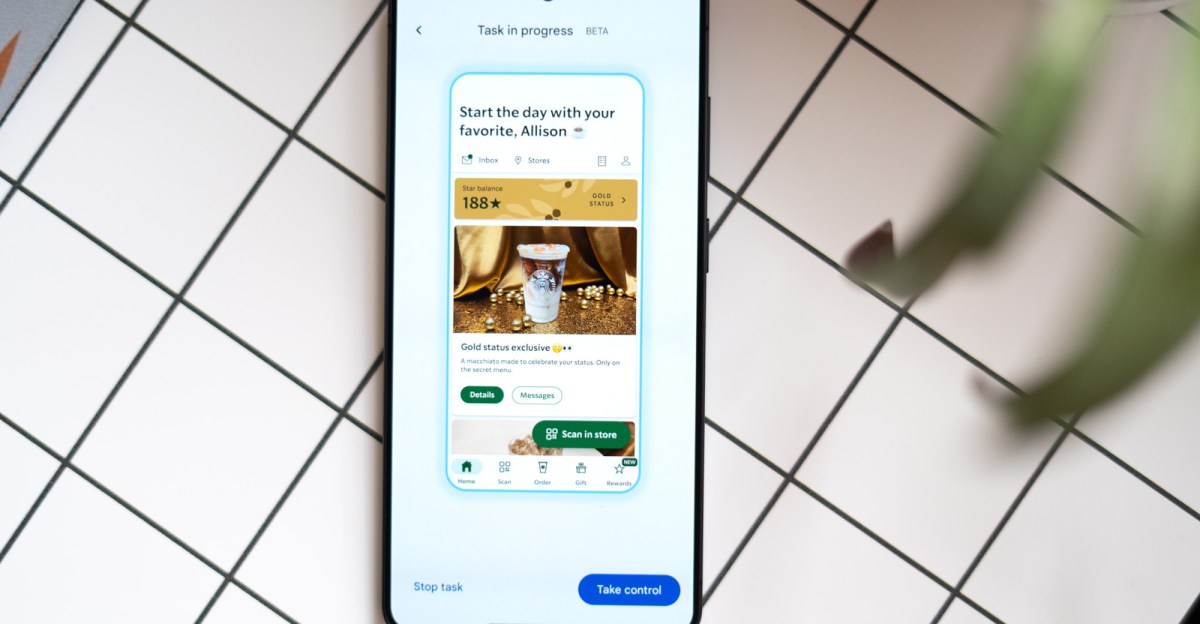

Google and Samsung have rolled out a new capability for the Gemini AI model that enables task automation directly on Samsung smartphones. The feature works by opening a virtual window of the targeted app and performing actions on the user’s behalf, all driven by natural‑language prompts. Initial support focuses on food‑delivery and rideshare applications, allowing users to place orders or request transportation without manually navigating each screen.

During early testing, the assistant received a straightforward request to order an Uber to the airport. It first asked which airport was intended, then added the destination, skipped unnecessary steps, and paused before final submission. The pause let the user review the details and approve the request, keeping the process transparent and under the user's control.

More complex prompts, such as ordering a coffee and a pastry, required additional input. The system scrolled through menu options, identified the requested drink, and asked whether the croissant should be warmed. With the user’s clarification, it completed the order preparation steps, demonstrating an ability to handle nuanced preferences.

The automation process is visible to the user, who can intervene at any point to stop or adjust the workflow. This visibility is intended to build trust: watching the assistant work feels like watching the phone use itself.

While the feature is still in beta, early impressions suggest it delivers on a promise long associated with AI assistants: performing multi‑step tasks with minimal user effort. The developers plan to expand the range of supported apps and continue testing the system's robustness across different scenarios.

Implications for Mobile AI Assistants

The introduction of Gemini‑driven task automation signals a shift toward more proactive and capable digital assistants on mobile platforms. By handling interactions within third‑party apps, the technology moves beyond simple voice commands to genuine workflow automation. This could reshape how users engage with everyday services, reducing friction and freeing time for other activities.

Industry observers note that the ability to pause before final actions addresses privacy and safety concerns by giving users the final say over transactions. As the feature matures, it may open the door to broader integration with a variety of services, potentially setting a new standard for AI‑enhanced smartphones.