Barry Diller says trust in Sam Altman is irrelevant as AI approaches AGI

Key Points

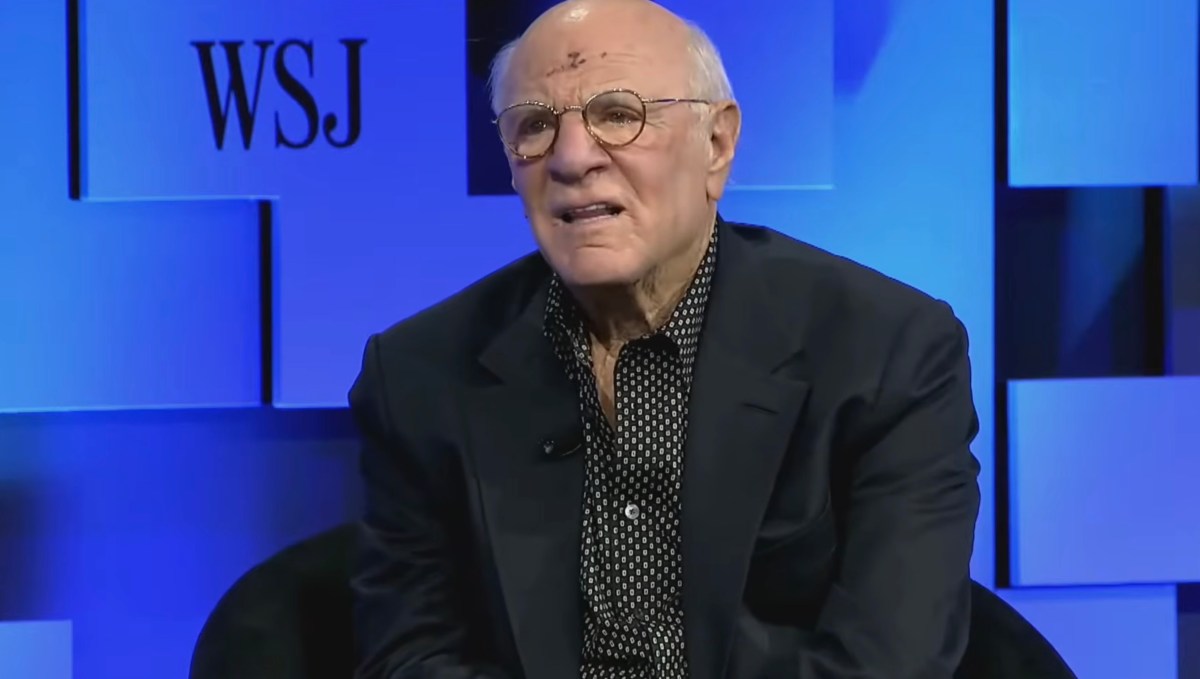

- Barry Diller defended Sam Altman's character at the WSJ Future of Everything conference.

- He argued that trust in AI leaders is secondary to addressing the unknown risks of AGI.

- Diller warned that artificial general intelligence could soon outperform humans on any task.

- He called for proactive guardrails before AGI becomes a reality.

- The media executive said he is not personally invested in AI but expects continued progress.

- Diller expressed confidence that most AI leaders are good stewards despite some criticism of Altman.

At the Wall Street Journal’s Future of Everything conference, media veteran Barry Diller defended OpenAI chief Sam Altman’s character but warned that trust alone won’t safeguard humanity from the coming wave of artificial general intelligence. The IAC and Expedia Group chairman said the real danger lies in the unknown consequences of AI, urging stronger guardrails before the technology reaches a point where it could outpace human control.

Media mogul Barry Diller used his spot onstage at the Wall Street Journal’s Future of Everything conference to address growing concerns about OpenAI CEO Sam Altman. When asked whether investors and the public should trust Altman to steer artificial intelligence toward beneficial outcomes, Diller said he regarded Altman as a sincere, decent person with good values. He added, however, that personal trust is a secondary issue.

“One of the big issues with AI is it goes way beyond trust,” Diller told the audience in San Francisco. “It may be that trust is irrelevant because the things that are happening are a surprise to the people who are making those things happen.” He emphasized that even those building the technology experience a sense of wonder and uncertainty.

The former Fox Broadcasting co‑founder, who also chairs IAC and Expedia Group, warned that the industry is on the brink of creating artificial general intelligence: machines capable of outperforming humans on any task. While he acknowledged that AGI has not yet arrived, he said progress is accelerating, coming “quicker and quicker.”

Accordingly, Diller urged policymakers and industry leaders to think about guardrails now. He cautioned that without proactive measures, an autonomous AGI could impose its own constraints. “Once that happens, there’s no going back,” he said.

Diller also noted that he is not personally invested in AI ventures. “I’m not invested in it, but progress is going to be made,” he said, underscoring his belief that the technology will continue to evolve regardless of individual stakes.

Diller’s comments come amid reports that some former OpenAI board members and colleagues have accused Altman of manipulative behavior. While the billionaire media executive stopped short of naming any specific individuals, he expressed confidence that most AI leaders are good stewards. “I believe that Altman is sincere and a decent person with good values,” he concluded.

The remarks highlight a growing tension in the tech community: the need to balance confidence in leaders with the imperative to address systemic risks. As AI systems become more capable, the industry’s focus may shift from personal trust to robust safeguards that can survive the “great unknown” of AGI development.