Anthropic Softens Safety Commitments Amid Pentagon Pressure

Key Points

- Anthropic replaces hard safety tripwires with flexible risk reports and safety roadmaps.

- The change is justified as a response to a competitive AI environment and collective‑action concerns.

- Defense Secretary Pete Hegseth reportedly pressed Anthropic for unrestricted military access to Claude.

- Potential penalties include invoking the Defense Production Act and revoking Pentagon contracts.

- Anthropic refuses to allow Claude to be used for mass surveillance or fully autonomous weapons.

- AI ethics leaders warn the softer policy could enable a gradual erosion of safety standards.

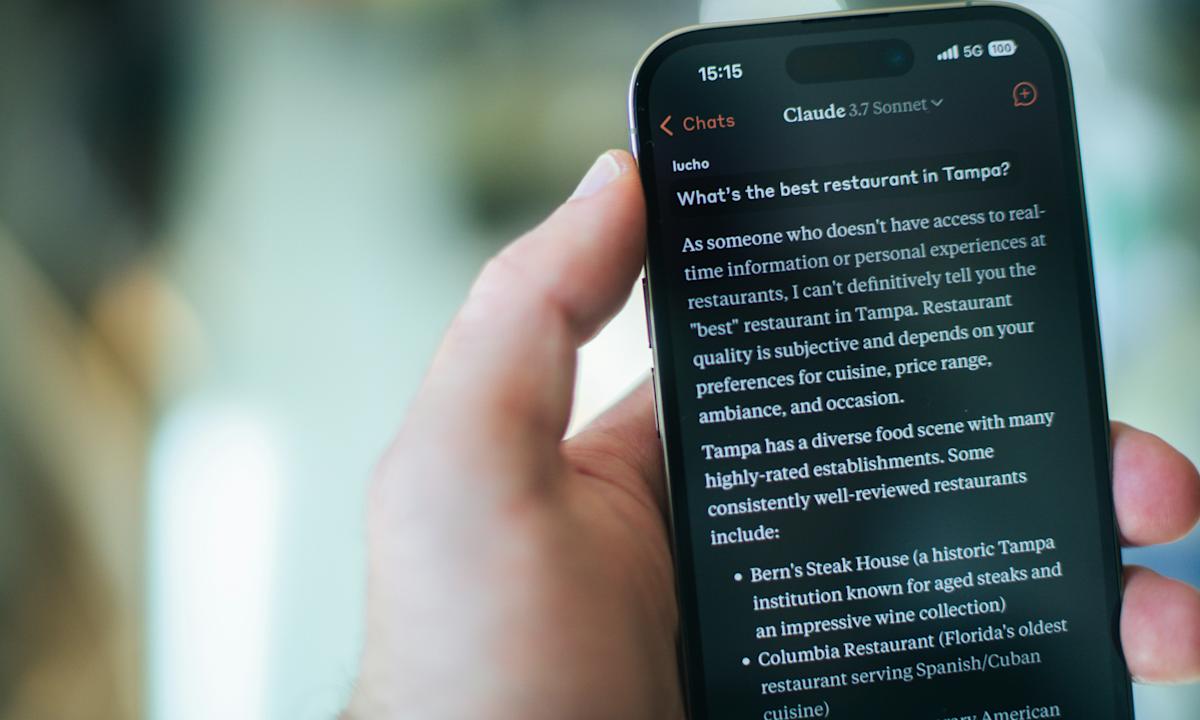

- Anthropic’s Claude model remains a key AI tool for sensitive Pentagon operations.

Anthropic announced a revision to its Responsible Scaling Policy, replacing hard safety tripwires with more flexible risk reports and safety roadmaps. The change follows reports that Defense Secretary Pete Hegseth urged the company to grant the military unrestricted access to its Claude AI model, threatening penalties under the Defense Production Act. Anthropic’s leadership argued that strict halts on model training would no longer help anyone given the rapid pace of AI development. Critics warned the shift could erode safeguards and enable a gradual “frog‑boiling” of safety standards.

Policy Shift at Anthropic

Anthropic disclosed that it is modifying its Responsible Scaling Policy (RSP). Previously, the policy contained firm tripwires that halted model training unless specific safety guarantees could be met in advance. The new version adopts a more relative approach, introducing “Risk Reports” and “Frontier Safety Roadmaps” to provide public transparency instead of hard limits.

Rationale Behind the Change

The company said the adjustment stems from a “collective action problem” in a competitive AI landscape. It also cited concerns that, with an anti‑regulatory stance prevailing in the United States, the field could end up less safe if some developers pause while others forge ahead without strong mitigations. Anthropic’s chief science officer, Jared Kaplan, told Time that the rapid advance of AI made unilateral commitments seem unhelpful, noting, "We felt that it wouldn't actually help anyone for us to stop training AI models."

Pentagon Pressure Reported

Concurrent with the policy announcement, Axios reported that Defense Secretary Pete Hegseth told Anthropic CEO Dario Amodei the company must give the military unfettered access to its Claude model by a set deadline or face penalties. Hegseth’s alleged threats included invoking the Defense Production Act, which could compel private firms to prioritize certain contracts for national defense, and potentially cutting Anthropic’s Pentagon contract while labeling the model a supply‑chain risk.

Implications for Military Use

Claude is reportedly the only AI model used for the Pentagon’s most sensitive work, with references to its involvement in a Venezuelan operation. A defense official emphasized the urgency of the technology, saying, "The only reason we're still talking to these people is we need them and we need them now." Anthropic has indicated willingness to adapt its usage policies for the Pentagon but refuses to allow the model to be used for mass surveillance of Americans or fully autonomous weapons.

Reactions From the AI Ethics Community

Chris Painter, director of the nonprofit METR, described the policy shift as both understandable and potentially ominous. He praised the focus on transparent risk reporting but warned that a more flexible RSP could lead to a “frog‑boiling” effect, where incremental rationalizations gradually erode safety standards. Painter noted that the change suggests Anthropic is moving into “triage mode” because current methods to assess and mitigate risk are lagging behind rapid capability growth.

Industry Context

Anthropic’s latest versions of Claude have received praise, especially for coding tasks. Earlier in the year, the company raised a large investment round, boosting its valuation to several hundred billion dollars, while a rival AI firm holds a valuation exceeding $800 billion. The policy revision reflects broader industry tension between rapid development, competitive pressure, and the desire to maintain robust safety safeguards.