Amazon Unveils AI-Powered Smart Glasses for Delivery Drivers

Key Points

- Amazon is developing AI‑powered smart glasses for delivery drivers.

- The glasses provide hands‑free scanning, navigation, and proof‑of‑delivery features.

- A vest‑mounted controller offers controls, a swappable battery, and an emergency button.

- Prescription and transitional lens options are supported.

- Trials are underway in North America with plans for broader deployment.

- Future updates may include real‑time defect detection and pet‑hazard awareness.

- Amazon also introduced a new warehouse robotic arm called “Blue Jay.”

- An AI analytics tool named “Eluna” was announced for warehouse operations.

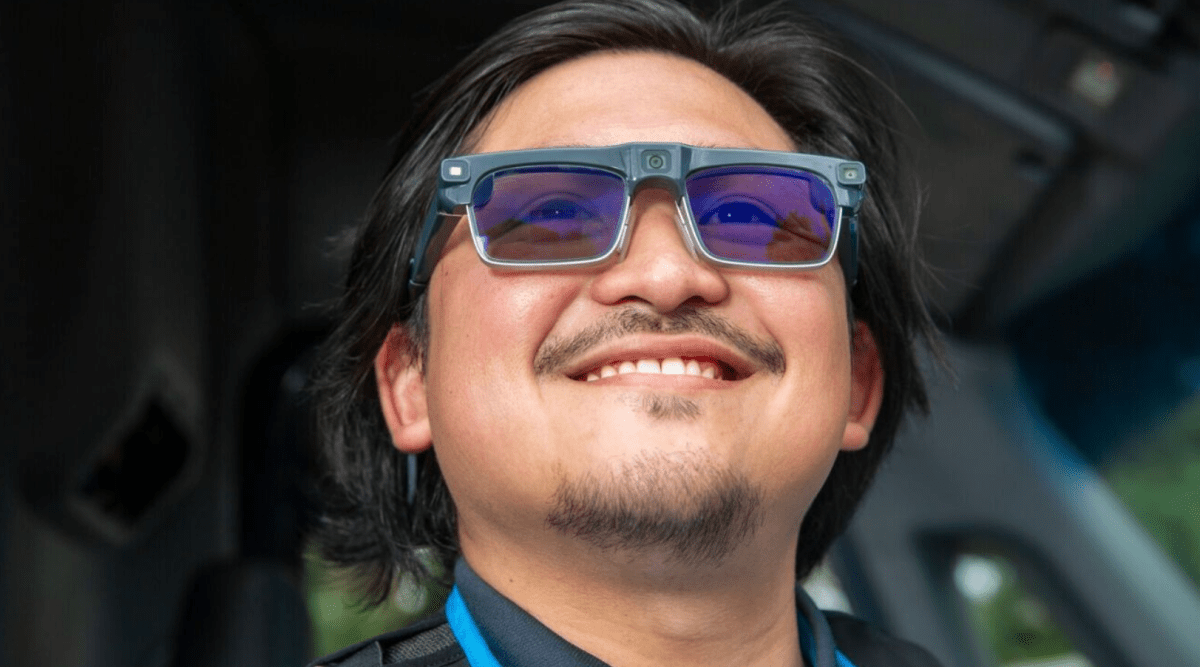

Amazon announced the development of AI‑enabled smart glasses designed to give its delivery drivers a hands‑free experience. The wearable technology combines computer‑vision cameras with real‑time sensing to let drivers scan packages, follow turn‑by‑turn directions, and capture proof of delivery without pulling out a phone. Paired with a vest‑mounted controller, the glasses also feature a swappable battery, emergency button, and options for prescription or transitional lenses. Amazon is trialing the devices with drivers in North America and plans to refine the system before a broader rollout, while also previewing related innovations such as a new robotic arm and an AI analytics tool.

Overview of Amazon’s New Wearable Technology

Amazon disclosed that it is creating AI‑driven smart glasses for its delivery workforce. The goal is to provide a hands‑free interface that reduces the need for drivers to switch attention between their phones, packages, and the surrounding environment. By integrating computer‑vision cameras and AI‑based sensing, the glasses display actionable information directly in the driver’s line of sight.

Key Functionalities

The glasses enable drivers to scan packages and receive turn‑by‑turn walking directions without handling a separate device. They also capture proof of delivery automatically, so drivers do not need to take a photo by hand. The visual overlay can highlight hazards, delivery tasks, and navigation cues, helping drivers locate packages inside the vehicle and guiding them to the correct address, even in multi‑unit apartments or business complexes.

Hardware and Design Elements

Each pair of glasses is paired with a controller that attaches to the delivery vest. The controller houses operational controls, a swappable battery, and a dedicated emergency button. The eyewear is compatible with prescription lenses and transitional lenses that adjust to changing light conditions, ensuring comfort and usability across varied delivery settings.

Deployment Strategy

Amazon is currently testing the smart glasses with delivery drivers in North America. The company indicated that the technology will be refined based on trial feedback before a wider rollout. The trial follows earlier reporting that Amazon had been working on similar wearable solutions.

Future Capabilities and Related Innovations

Future updates aim to introduce real‑time defect detection, which would alert drivers if a package is delivered to the wrong address. Additional sensors could detect pets in yards and adapt to low‑light hazards. Alongside the glasses, Amazon also revealed a new robotic arm named “Blue Jay,” designed to assist warehouse employees, and an AI tool called “Eluna” that will provide operational insights within its fulfillment centers.